AI Orchestration in Customer Service: Implementation Guide

Customer service breaks first when a company scales fast. Discover how AI orchestration transforms support operations through coordinated systems, automated workflows, and intelligent routing that scales with your business.

Why Customer Service Breaks at Scale — and How Orchestration Fixes It

Customer service breaks first when a company scales fast. Ticket volumes rise, issues become more technical, and traditional support models (more agents or basic chatbots) struggle to keep up. AI orchestration in customer service changes this dynamic by introducing a central intelligence layer that connects conversational AI with CRM systems, ticketing platforms, engineering logs, billing tools, and real-time infrastructure data. Instead of simply answering questions, an orchestrated system investigates problems, gathers context across systems, applies business rules, and executes actions automatically.

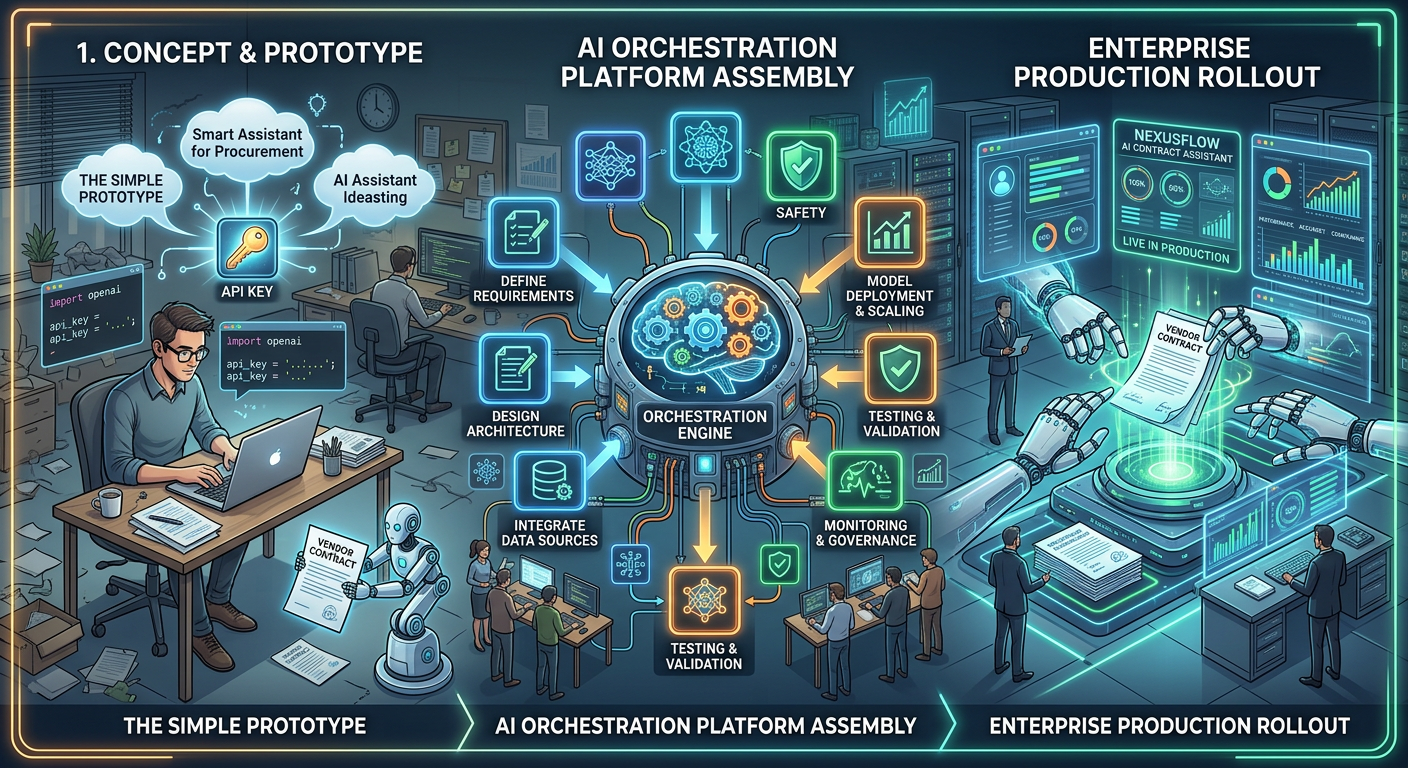

The journey toward enterprise AI usually starts with a false sense of simplicity. A developer grabs an API key from a major language model provider, writes a quick script to pass a user's prompt to that external server, and watches as coherent responses populate the application. It feels like a massive win for a weekend's work. However, once exposed to real users, the cracks appear immediately — the AI confidently hallucinates answers about private company data it cannot access, the API bill doubles every few weeks, and a major enterprise client threatens to leave when their security team discovers sensitive data is being sent to a public AI model. That moment is when formal AI orchestration implementation begins.

Phase 1: Pinning Down the Real Use Case

Before writing any new code, product and engineering leaders must stop and define exactly what they are trying to build. The first mistake is treating generative AI as a magic wand rather than a specific tool for a specific job. The right starting point is a foundational AI orchestration checklist that asks the hard question: are you building an autonomous agent that can take actions — like sending an email or updating a record — or do you need a highly accurate search engine that can read thousands of dense documents?

This clarity is what the engineering team needs. If users simply want a safe, accurate way to search their own historical support files, the system must be heavily optimised for reading, data management, and hallucination prevention — not for building a complex robot that uses external tools. This planning phase turns a vague idea into a strict set of requirements, ensuring that the work involved in implementing AI orchestration is tied directly to a real business problem rather than chasing technology trends.

Before building anything, answer one question: are you building an agent that takes actions, or a search system that retrieves verified facts? The architecture for each is completely different — and choosing wrong costs months of rework.

Phase 2: Drawing the Architecture

With a clear goal, the engineering team moves into architectural design. A successful AI orchestration deployment means avoiding vendor lock-in from the outset. The technology moves too fast — the best model today may be obsolete in six months. The design must be modular.[2]

The core of the new architecture is the orchestration framework itself. A popular open-source option known for ingesting messy data and running highly accurate searches is selected. Next, a vector database is chosen — a specialised database designed to store mathematical representations of text for lightning-speed retrieval. Because the platform handles sensitive corporate data, every client's data is kept strictly separated. Finally, rather than sending text to the public internet for processing, a local embedding model runs on private servers. By treating every component — the base framework, the language model, the data parser — as a separate, interchangeable block, the team ensures flexibility: if a part breaks or gets outdated, it can be swapped without taking down the entire application.
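The idea of interchangeable blocks can be sketched as a set of small interfaces with an orchestrator that depends only on those interfaces. This is a minimal illustration, not a reference design: the names `Embedder`, `VectorStore`, `LanguageModel`, and `Orchestrator` are hypothetical, and the three toy implementations exist only so the sketch runs without any external service.

```python
from dataclasses import dataclass
from typing import Protocol


class Embedder(Protocol):
    def embed(self, text: str) -> list[float]: ...


class VectorStore(Protocol):
    def add(self, tenant_id: str, text: str, vector: list[float]) -> None: ...
    def search(self, tenant_id: str, vector: list[float], k: int) -> list[str]: ...


class LanguageModel(Protocol):
    def complete(self, prompt: str) -> str: ...


@dataclass
class Orchestrator:
    """Depends only on the interfaces above, so any block can be swapped."""
    embedder: Embedder
    store: VectorStore
    model: LanguageModel

    def answer(self, tenant_id: str, question: str) -> str:
        passages = self.store.search(tenant_id, self.embedder.embed(question), k=3)
        prompt = "Context:\n" + "\n".join(passages) + f"\n\nQuestion: {question}"
        return self.model.complete(prompt)


class LengthEmbedder:
    """Toy stand-in: a real embedder returns a dense semantic vector."""
    def embed(self, text: str) -> list[float]:
        return [float(len(text))]


class InMemoryStore:
    """Toy stand-in that keeps each tenant's rows in a separate list."""
    def __init__(self) -> None:
        self._rows: dict[str, list[tuple[list[float], str]]] = {}

    def add(self, tenant_id: str, text: str, vector: list[float]) -> None:
        self._rows.setdefault(tenant_id, []).append((vector, text))

    def search(self, tenant_id: str, vector: list[float], k: int) -> list[str]:
        rows = self._rows.get(tenant_id, [])  # never reads another tenant's rows
        ranked = sorted(rows, key=lambda row: abs(row[0][0] - vector[0]))
        return [text for _, text in ranked[:k]]


class EchoModel:
    """Toy stand-in for a language model: returns the prompt it was given."""
    def complete(self, prompt: str) -> str:
        return "STUB ANSWER FOR PROMPT:\n" + prompt
```

Replacing `EchoModel` with a client for a different provider, or `InMemoryStore` with a real vector database adapter, would not require changes to `Orchestrator.answer`.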

Phase 3: Building Context and Data Pipelines

Taking the architecture from whiteboard to working system is where the real engineering happens. This phase is about building the pipes that connect existing databases to the new AI engine — writing scripts to securely pull old support tickets and contracts from legacy storage, clean up messy text formatting, and chop documents into smaller, readable chunks. These chunks are converted into mathematical vectors and loaded into the secure database.[1]
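The cleaning and chunking step can be sketched as follows. This is a minimal example that assumes word-based chunks with a small overlap between neighbours; the function names and the default sizes are illustrative, and a production pipeline might split on sentences, headings, or tokens instead.

```python
import re


def clean_text(raw: str) -> str:
    """Collapse the runs of whitespace that legacy exports tend to leave behind."""
    return re.sub(r"\s+", " ", raw).strip()


def chunk_words(text: str, max_words: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of at most max_words words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    chunk boundary still appears whole in one of the two chunks.
    """
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = clean_text(text).split()
    step = max_words - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

Each returned chunk would then be passed to the embedding model and stored alongside its vector.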

The most critical piece of engineering is how the user's actual question is handled. When a user types a query, the orchestration layer intercepts it and runs a search through the vector database to find the most relevant paragraphs from that specific user's company history. It then builds a larger set of instructions behind the scenes, combining the user's original question with the retrieved background information and adding strict rules telling the AI exactly how to answer. This process pushes the language model to rely on retrieved, verifiable facts rather than guessing. Getting this interplay of searching and prompt-building right is the biggest technical hurdle before any AI orchestration rollout: it is the main mechanism that keeps hallucinations in check and makes the system safe for professional use.
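The search-then-prompt flow described above can be sketched in a few functions. This is an illustration under stated assumptions: the bag-of-words `embed` function is a toy replacement for a real embedding model, the in-memory dictionary stands in for the vector database, and the wording of `RULES` is hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks_by_tenant: dict[str, list[str]],
             tenant_id: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question, for one tenant only."""
    chunks = chunks_by_tenant.get(tenant_id, [])
    query = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(query, embed(c)), reverse=True)
    return ranked[:k]


# Hypothetical grounding rules; real deployments tune this wording carefully.
RULES = (
    "Answer using only the numbered context below. "
    "If the context does not contain the answer, say you do not know."
)


def build_prompt(question: str, passages: list[str]) -> str:
    """Combine rules, retrieved passages, and the user's question."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return f"{RULES}\n\nContext:\n{context}\n\nQuestion: {question}"
```

The resulting prompt string is what the orchestration layer would send to the language model in place of the raw user question.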

Phase 4: Locking Down Security and Governance

In enterprise software, a brilliant feature is completely useless if it fails a security audit. The entire AI orchestration implementation must comply with strict data protection laws — and the orchestration layer is the enforcement point.[4]

The engineering team builds modules that automatically scan text for sensitive information — social security numbers, employee names, financial figures — and redact them before any prompt reaches the language model. The orchestration framework is also tied directly into the existing user permission system. Before the AI even attempts to search the database, the system checks who is asking. If an entry-level buyer asks for the details of the CEO's employment contract, the orchestration layer blocks the search entirely — that user lacks the necessary permissions. By building these security rules directly into the middleware, the platform becomes a tightly controlled, auditable tool that even the most cautious enterprise clients can trust.
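A minimal sketch of this middleware step, assuming role-based permissions and regex-based redaction, might look like the following. The class names, the role strings, and the placeholder tokens are hypothetical. The regular expressions cover only US-style social security numbers and currency amounts; detecting employee names would typically need a named-entity recogniser, which is outside this sketch.

```python
import re
from dataclasses import dataclass

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
MONEY_RE = re.compile(r"[$£€]\s?\d+(?:,\d{3})*(?:\.\d+)?")


def redact(text: str) -> str:
    """Mask SSNs and currency amounts before text is placed in a prompt."""
    text = SSN_RE.sub("[REDACTED_SSN]", text)
    return MONEY_RE.sub("[REDACTED_AMOUNT]", text)


@dataclass(frozen=True)
class User:
    user_id: str
    roles: frozenset


@dataclass(frozen=True)
class Collection:
    name: str
    required_role: str
    texts: tuple


class PermissionDenied(Exception):
    pass


def guarded_search(user: User, collection: Collection, query: str,
                   audit_log: list) -> list[str]:
    """Check permissions, record the attempt, then search and redact."""
    allowed = collection.required_role in user.roles
    # Log every attempt, including denied ones, so reviews can audit access.
    audit_log.append(
        {"user": user.user_id, "collection": collection.name, "allowed": allowed}
    )
    if not allowed:
        # Block before any search runs, as the text describes.
        raise PermissionDenied(f"{user.user_id} may not search {collection.name}")
    hits = [t for t in collection.texts if query.lower() in t.lower()]
    return [redact(t) for t in hits]
```

The substring match is only a placeholder for the vector search; the point of the sketch is the order of operations: permission check, audit entry, search, redaction.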

Security enforcement must live inside the orchestration middleware — not bolted on as an afterthought. Permission checks, PII redaction, and audit logging must run before any query reaches an AI model.

NIST AI Risk Management Framework [4]

Phase 5: Evaluation, Guardrails, and Cost Management

Before real customers touch the system, the team must figure out how to monitor it. Building the data pipelines is only the first step — keeping the system accurate and affordable over time is equally important. Because language models can be unpredictable and change their behaviour over time, automated testing is essential: smaller, cheaper AI models constantly grade the answers coming out of the main system, checking that tone is professional and facts are correct.[3]
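The automated grading loop can be sketched as a small harness that accepts any grader function. In the sketch below, `heuristic_grader` is a deterministic stand-in for the smaller grading model mentioned above, so that the example runs offline; the names, the banned-word list, and the 95% pass threshold are all assumptions.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    question: str
    context: str   # the passages the main system retrieved
    answer: str    # what the main system replied


@dataclass
class Grade:
    grounded: bool
    professional: bool


Grader = Callable[[EvalCase], Grade]

BANNED = ("lol", "whatever", "dunno")  # hypothetical tone filter


def heuristic_grader(case: EvalCase) -> Grade:
    """Stand-in for a cheap grading model.

    Grounded: every number in the answer also appears in the context.
    Professional: the answer contains none of the banned words.
    """
    answer_numbers = set(re.findall(r"\d+", case.answer))
    context_numbers = set(re.findall(r"\d+", case.context))
    words = re.findall(r"\w+", case.answer.lower())
    return Grade(
        grounded=answer_numbers <= context_numbers,
        professional=not any(word in BANNED for word in words),
    )


def run_eval(cases: list[EvalCase], grader: Grader,
             min_pass_rate: float = 0.95) -> dict:
    """Grade every case and report whether the pass rate meets the bar."""
    grades = [grader(case) for case in cases]
    passed = sum(g.grounded and g.professional for g in grades)
    pass_rate = passed / len(grades) if grades else 0.0
    failures = [c.question for c, g in zip(cases, grades)
                if not (g.grounded and g.professional)]
    return {"pass_rate": pass_rate, "ok": pass_rate >= min_pass_rate,
            "failures": failures}
```

Because `run_eval` takes the grader as a parameter, the heuristic can later be replaced by a call to a real grading model without changing the harness.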

Cost management runs in parallel. Semantic routing ensures that when a user asks a simple question — formatting a date or summarising a short paragraph — the system routes the task to a free, open-source model running on the company's own servers. Expensive, premium AI models are reserved only for the hardest, most complex questions. The system also tracks exactly how much money each user is costing in API fees. This focus on cost control and automated testing ensures the new feature neither bankrupts the company nor frustrates users with degrading quality over time.
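Routing and per-user cost tracking can be illustrated with the sketch below. Note the simplification: a keyword and length heuristic stands in for true semantic routing, which would normally classify the request with embeddings or a small classifier model. The tier names and the price per thousand tokens are hypothetical figures chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_tokens: float


# Hypothetical tiers: a self-hosted model with no API fee and a paid model.
LOCAL = ModelTier("local-small", 0.0)
PREMIUM = ModelTier("premium-large", 0.03)

SIMPLE_HINTS = ("format", "summarise", "summarize", "translate", "convert")


def route(prompt: str, max_simple_words: int = 60) -> ModelTier:
    """Send short, routine requests to the local model, the rest to premium."""
    text = prompt.lower()
    is_short = len(text.split()) <= max_simple_words
    looks_routine = any(hint in text for hint in SIMPLE_HINTS)
    return LOCAL if is_short and looks_routine else PREMIUM


@dataclass
class CostLedger:
    """Accumulates API spend per user so heavy users are visible."""
    usd_by_user: dict = field(default_factory=lambda: defaultdict(float))

    def record(self, user_id: str, tier: ModelTier, tokens: int) -> float:
        cost = tokens / 1000 * tier.usd_per_1k_tokens
        self.usd_by_user[user_id] += cost
        return cost
```

A caller would run `tier = route(prompt)`, send the request to the chosen model, and then call `ledger.record(user_id, tier, tokens_used)` with the token count the model reports.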

Phases 6 & 7: Rolling Out and Building for the Long Term

An enterprise-grade AI orchestration rollout is never done all at once — flipping a switch and hoping for the best is a recipe for disaster. The correct approach uses controlled, phased releases.

Quiet release to friendly beta testers

Start with a tiny group of internal users. Keep a close eye on the monitoring dashboards: watch for vector database slowdowns, error spikes, and any signs the system is returning incorrect answers.

Load balancing and stress testing

Carefully balance server loads to ensure a sudden spike in AI usage does not slow down the rest of the platform. Verify that vector databases can handle thousands of simultaneous searches without degrading.

Validate accuracy with beta users

Confirm that answers are actually accurate before expanding. A near-zero hallucination rate and stable response times during peak hours are the metrics that define readiness.

Gradual expansion to full production

As the system proves stability and accuracy, slowly expand the release to more users. Replace the old, fragile API script with the new modular system — without causing disruptions to existing customers.

Build for vendor independence long-term

The modular architecture means no vendor lock-in. As better models emerge, swap them in without rewriting core application code — staying current without starting over every six months.
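The phased release steps above can be supported by a simple percentage gate. The sketch below is one common approach, assuming stable string user IDs: each user is hashed into a fixed bucket from 0 to 99, so raising the percentage only ever adds users and never flips someone back to the old system. The function names and the feature label are hypothetical.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str = "orchestrated-ai") -> int:
    """Map a user to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def uses_new_system(user_id: str, percent: int,
                    internal_users: frozenset = frozenset()) -> bool:
    """Decide whether this user is served by the new orchestration layer.

    Internal beta testers are always included; everyone else is included
    once the rollout percentage exceeds their bucket number.
    """
    if user_id in internal_users:
        return True
    return rollout_bucket(user_id) < percent
```

Starting with `percent=0` and a short list of internal users matches the quiet release step; increasing `percent` in stages matches the gradual expansion, with the old API script still serving everyone outside the gate.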

Organisations that take the time to build a proper orchestration deployment today are building a foundation that will last — protecting themselves from vendor lock-in and giving themselves the power to build smart, secure tools their customers can actually rely on.

Frequently Asked Questions

Q1. What are the main goals of a proper orchestration setup?

The main goal is to replace direct, fragile connections to AI models with a strong, central management layer. Instead of just passing text back and forth, this middleware securely fetches private data from your databases, builds highly detailed instructions for the AI, enforces user access permissions, and formats the final answer so your software can read it. It takes a basic chatbot concept and turns it into a secure, reliable system capable of complex reasoning.

Q2. How does an AI orchestration deployment actually save money?

It saves money through intelligent routing. Without orchestration, every user prompt typically goes to the same premium AI model, burning through budget quickly. With a proper deployment, the system analyses the incoming question first, routing simple tasks to a highly efficient model on your own hardware that carries no per-request API fee, and only paying for premium AI models when the user asks a genuinely complex question. Per-query costs stay low even as usage grows.[3]

Q3. What are the most important items on an AI orchestration checklist?

A solid AI orchestration checklist must cover security, data handling, and monitoring. Verify that your system strips sensitive personal information before sending data to an external model. Confirm your databases respect user permission levels so employees cannot access restricted files. Ensure you have automated systems in place to track API costs, monitor server response times, and test AI answers for accuracy to catch hallucinations before users see them.

Q4. Why is implementing AI orchestration critical for data privacy?

Sending sensitive corporate or personal data to a public AI provider can violate privacy laws and security compliance standards. Implementing AI orchestration addresses this by keeping retrieval and embedding inside your own network: you run the search databases and embedding models on your own private, secure infrastructure, and any prompt that does leave for an external model is redacted first. The orchestration layer ensures that sensitive context retrieval and data processing happen in an isolated environment, which helps you pass strict security audits.[4]

Q5. What are the biggest risks during an AI orchestration rollout?

The biggest risks revolve around system failure under heavy traffic and the AI returning bad information. If vector databases are not set up to handle thousands of simultaneous searches, the entire application can slow down or crash during peak usage. Additionally, if the data ingestion phase chunks text poorly, the AI pulls up irrelevant background information — resulting in confident but completely incorrect answers for your users.[1]

Q6. How do we know if our setup was actually successful?

Measure success across both system metrics and user behaviour. On the technical side, a successful AI orchestration implementation shows stable server response times during peak hours, lower per-query API costs, and a near-zero error rate. On the user side, success looks like a significant drop in reported hallucinations, higher daily engagement with the new features, and the ability to pass rigorous security reviews required by your largest enterprise customers.

References

All sources verified March 2026.
