AI Orchestration Security: Complete Enterprise Governance Guide

Table of Contents

- Introduction: From Deployment to Governance

- The Enterprise Wake-Up Call

- What Is AI Orchestration Security?

- The Four Pillars of AI Orchestration Governance

- Building AI Orchestration Guardrails

- AI Orchestration Compliance in Regulated Environments

- Designing Secure AI Orchestration Architecture

- Common Failure Patterns

- Measuring Security Effectiveness

- Change Management and Cultural Alignment

- The Strategic Advantage of Secure Orchestration

- Conclusion

- Frequently Asked Questions

When software companies rush to add AI to their products, things can quickly turn into a messy, expensive free-for-all where sensitive customer data might accidentally slip out. The fix for this headache is AI orchestration security, which acts like a smart, centralized traffic cop for all your company's AI requests. Instead of letting every part of your software talk directly to outside AI models, this central hub automatically scrubs private details like names or credit card numbers before the data ever leaves your servers. It also makes sure only the right employees can use certain AI tools, and it double-checks the AI's answers for mistakes or bad advice before showing them to the user. With this secure middleman in place, your developers can build new features without reinventing security each time, and your sales team can show large, cautious clients concrete evidence of how their data is protected. [1]

In the last few years, enterprise software has undergone a profound structural shift. Artificial intelligence is no longer an experimental sandbox initiative isolated in an innovation lab; it is embedded in the core operations of the modern business. Today, AI powers customer support workflows, underwriting engines, sales copilots, financial reconciliation systems, fraud detection pipelines, and executive operational dashboards. As a result, foundation models are being called thousands and sometimes millions of times per day across a single organization. As these systems scale, enterprise technology teams arrive at a critical realization: deploying AI is relatively easy; governing it is exceptionally difficult.

Consider the trajectory of a mid-sized technology company that built a capable AI assistant for internal operations. Initially, the tool was a clear success: it summarized complex vendor contracts, drafted nuanced renewal emails, and analyzed upcoming revenue risks with impressive accuracy. Within months, its use spread across departments. Behind the scenes, however, architectural cracks began to show. No centralized controls governed which models processed internal data. No audit trail recorded who was changing the prompt instructions. Most alarmingly, sensitive customer information occasionally flowed into external, third-party model APIs without a formal security review or a validated data processing agreement. Nothing catastrophic happened immediately, but leadership began asking the right, if uncomfortable, questions.

Those questions mark the true beginning of operational maturity. AI systems need more than basic performance monitoring; they require structured, systemic oversight. They need consistent policy enforcement before, during, and after execution. They need guardrails that actively prevent misuse and sensitive data exposure and that help detect model drift. They also require centralized logging, formal approval workflows, and clear accountability mapped to human owners. This is where AI orchestration security becomes foundational. It is not an optional security layer or an afterthought bolted onto existing AI applications; it is the architecture that keeps enterprise AI operating safely, predictably, and in line with corporate policy. This guide explores how enterprise teams can build a structured framework around AI orchestration security, integrating governance, compliance, and operational control into every layer of the orchestration stack.

The Enterprise Wake-Up Call: Why Security Comes After Scale

Many organizations begin their AI journey with a singular, overriding focus on feature velocity. Product teams are heavily incentivized to ship intelligent summarization tools as quickly as possible to wow users. Simultaneously, engineering departments rush to embed sophisticated recommendation engines, while data teams deploy predictive models directly into production. The early wins are undeniably exciting and often drive significant momentum. But as adoption increases and AI becomes decentralized across the company, the architectural complexity compounds at an alarming rate. [2]

Different teams begin to integrate models using entirely different patterns and libraries. Core prompt logic, which dictates how the AI behaves, is duplicated across dozens of microservices, making global updates nearly impossible. More critically, sensitive information begins flowing through completely disparate AI endpoints without any centralized security review or data masking. Consequently, telemetry and logs are fragmented or entirely incomplete. When compliance officers inevitably ask for traceability regarding how an automated decision was made, the engineering response usually involves days of manual reconstruction and log diving. This is not due to negligence on the part of the developers—it is an architectural inevitability when scaling without a plan.

What Is AI Orchestration Security?

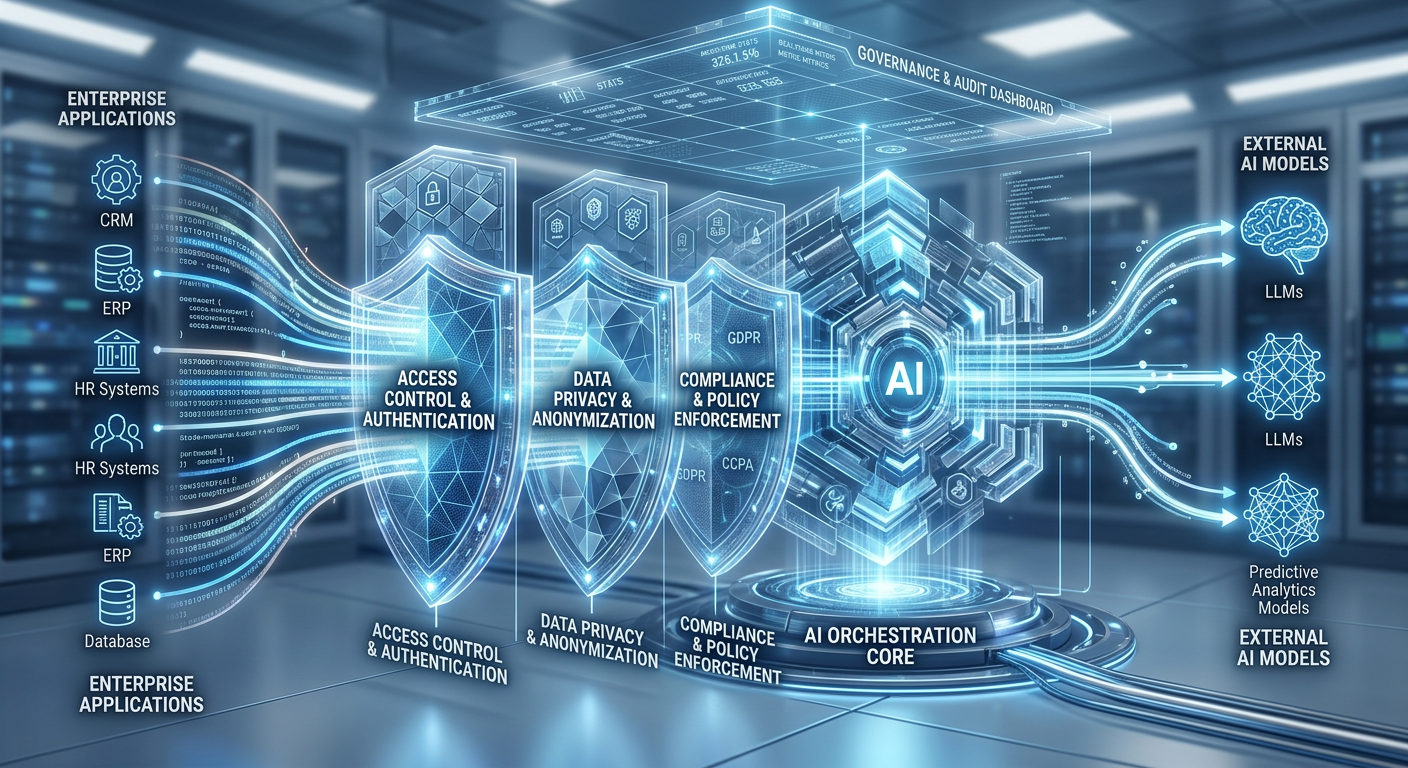

At its core, AI orchestration security refers to the practice of embedding protection, strict policy enforcement, and comprehensive observability directly into the orchestration layer that coordinates your AI models and workflows. Instead of allowing various frontend applications or backend microservices to call AI model APIs directly, organizations introduce a dedicated, central orchestration service. This service effectively becomes the intelligent control plane sitting firmly between frontend applications, backend enterprise data stores, and the external or internal model providers.

Within that centralized control plane, a structured set of security operations runs automatically. First, model access is restricted by organizational policy, so only authorized services can invoke specific, approved models. Next, incoming data is validated and sanitized to strip out sensitive information before anything is sent to a model. The prompt templates that guide the AI are version-controlled and require formal approval, treating prompt engineering with the same rigor as software engineering. When the model returns a response, the output is validated against predefined schemas and safety constraints so that malformed, unsafe, or toxic content is caught before it reaches the user. Finally, logs capture the full decision lineage, creating a reliable forensic trail. This is not simply security applied at the infrastructure network level; it is architectural oversight applied to the AI workflow itself. Without this orchestration, AI governance remains reactive, fragmented, and vulnerable. With secure orchestration, AI becomes controllable, predictable, and auditable.
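The ordered stages described above can be sketched as a single fail-closed pipeline. This is a minimal illustration, not a reference implementation: the service names, model names, the `MODEL_ACCESS` and `APPROVED_PROMPTS` tables, and the size limit are all hypothetical, and the sanitize and output-check stages are placeholders for the fuller guardrails discussed later in this guide.

```python
from dataclasses import dataclass, field
from typing import Callable

MAX_INPUT_CHARS = 8_000  # assumed input size limit

# Hypothetical allowlist: which calling services may use which models.
MODEL_ACCESS = {
    "support-bot": {"model-small"},
    "underwriting": {"model-small", "model-large"},
}

# Hypothetical registry of approved, versioned prompt templates.
APPROVED_PROMPTS = {"summarize": ("v3", "Summarize the following text:\n{input}")}


class PolicyViolation(Exception):
    """Raised by any stage to stop the request (the pipeline fails closed)."""


@dataclass
class Request:
    service: str    # identity of the calling service
    model: str      # requested model name
    text: str       # caller-supplied input
    prompt_id: str  # which approved prompt template to use
    audit: list = field(default_factory=list)


def check_access(req: Request) -> None:
    if req.model not in MODEL_ACCESS.get(req.service, set()):
        raise PolicyViolation(f"{req.service} may not call {req.model}")


def sanitize(req: Request) -> None:
    # Placeholder for real input guardrails (PII redaction, schema checks).
    req.text = req.text.strip()
    if len(req.text) > MAX_INPUT_CHARS:
        raise PolicyViolation("input exceeds size limit")


def render_prompt(req: Request) -> str:
    if req.prompt_id not in APPROVED_PROMPTS:
        raise PolicyViolation(f"no approved template for {req.prompt_id}")
    version, template = APPROVED_PROMPTS[req.prompt_id]
    req.audit.append(("prompt_version", version))
    return template.format(input=req.text)


def check_output(output: str) -> str:
    # Placeholder for real output guardrails (schema, safety, confidence).
    if not output.strip():
        raise PolicyViolation("empty model output")
    return output


def orchestrate(req: Request, call_model: Callable[[str, str], str]) -> str:
    """Run each stage in order and record the outcome, even when blocked."""
    try:
        check_access(req)
        sanitize(req)
        prompt = render_prompt(req)
        output = check_output(call_model(req.model, prompt))
    except PolicyViolation as exc:
        req.audit.append(("outcome", f"blocked: {exc}"))
        raise
    req.audit.append(("outcome", "ok"))
    return output
```

The key design choice is that `call_model` is injected. Application code never holds a provider client, so every call has to pass through the same ordered checks, and a blocked request still leaves an audit entry.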

Secure Your AI Operations

Hundred Solutions helps enterprise companies implement robust AI orchestration security frameworks. Get expert guidance on governance, compliance, guardrails, and policy enforcement for your AI infrastructure.

The Four Pillars of AI Orchestration Governance

- Policy as Code: Security policies should be embedded directly into the orchestration layer, an approach known as Policy as Code. Instead of relying on static compliance documents or wiki pages that developers may ignore, teams define explicit rules in code that enforce data handling standards at runtime. For example, one policy might state that certain personally identifiable information (PII) may never be transmitted to external, cloud-based providers under any circumstances. Another might restrict data originating in Europe to model endpoints hosted within the EU, a common way to simplify GDPR obligations around cross-border data transfers. Crucially, these policies must run automatically and deterministically before any model call occurs, acting as a gate that requests cannot bypass. [3]

- Role-Based Access Control: The system must also enforce rigorous role-based access control (RBAC). Not every user, application, or service should have the same AI capabilities. The orchestration layer should enforce strict tenant isolation, separation between staging and production environments, and granular role-based permissions. By embedding access control directly into the orchestration hub, enterprises turn AI from a loosely connected, risky feature into a tightly governed, enterprise-grade system.

- Observability and Auditability: A mature system also depends on observability and auditability. The orchestrator logs every orchestration event to create an unbroken chain of custody, recording who or what service initiated the request, which model the routing engine selected, and which version of the prompt template was used. Without this level of observability, demonstrating regulatory compliance is extremely difficult. With centralized logging, AI orchestration compliance becomes something teams can verify with evidence rather than an aspirational goal.

- Lifecycle and Change Management: Finally, organizations must implement strict Lifecycle and Change Management. The reality of modern AI is that prompt logic changes frequently, underlying foundational models are constantly upgraded or deprecated, and the routing logic determining which model to use evolves continuously. Therefore, every single change to this ecosystem must be meticulously controlled and reviewable via pull requests. Formal approval workflows must exist, especially for modifying prompts that process regulated or highly sensitive enterprise data.
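The first two pillars can be expressed as a small, ordered rule set that runs before every model call. Dedicated policy engines such as Open Policy Agent exist for this purpose. The sketch below uses plain Python only to show the idea. The roles, capabilities, and the `CallContext` fields are hypothetical, and it assumes an upstream scanner has already set `contains_pii`.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CallContext:
    role: str                # caller's role, e.g. "analyst" (hypothetical)
    data_region: str         # where the data originated, e.g. "EU"
    contains_pii: bool       # assumed to be set by an upstream input scanner
    provider_external: bool  # True if the model is hosted by a third party
    provider_region: str     # where the model endpoint is hosted


# Hypothetical RBAC table: role -> permitted AI capabilities.
ROLE_PERMISSIONS = {
    "analyst": {"summarize"},
    "underwriter": {"summarize", "risk_score"},
}

Rule = Callable[[CallContext, str], bool]

# Ordered, named rules; each returns True when the call is allowed.
RULES: list[tuple[str, Rule]] = [
    ("rbac", lambda ctx, cap: cap in ROLE_PERMISSIONS.get(ctx.role, set())),
    ("no_pii_to_external", lambda ctx, cap: not (ctx.contains_pii and ctx.provider_external)),
    ("eu_residency", lambda ctx, cap: ctx.data_region != "EU" or ctx.provider_region == "EU"),
]


def evaluate(ctx: CallContext, capability: str) -> tuple[bool, str]:
    """Deterministic evaluation: the first failing rule denies the call."""
    for name, rule in RULES:
        if not rule(ctx, capability):
            return False, name
    return True, "allowed"
```

Because the rules are ordinary code, changes to them can go through the same pull-request review and approval workflow that the Lifecycle and Change Management pillar calls for. The returned rule name also gives the audit log a precise reason for every denial.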

Building AI Orchestration Guardrails into the Workflow

Guardrails in AI systems are not static, one-time filters; they are dynamic enforcement mechanisms embedded throughout the orchestration pipeline. To be effective, enterprise AI orchestration guardrails must operate consistently across three distinct stages of the data journey.

Before a model receives any data, the orchestration layer must apply Input Guardrails. In this phase, the system scans the incoming payload to detect and redact sensitive information such as credit card or Social Security numbers. It validates the request schema so that malformed requests are rejected before they cause processing errors. It confirms tenant authorization, ensuring the requesting user has the right to perform the action. Finally, it enforces data residency constraints, geofencing data so it does not cross restricted international borders. Intervening at this point prevents unintended data exposure before any AI processing begins.
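As one example of an input guardrail, the sketch below redacts candidate card numbers and US Social Security numbers before a payload leaves the orchestrator. It is a minimal, assumption-laden sketch. The regular expressions cover only common layouts, and the Luhn checksum is used to reduce false positives on long digit runs such as order numbers. Production systems usually combine patterns like these with dedicated PII-detection tooling.

```python
import re

# Candidate card numbers: 13-19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")
# US Social Security number in the common ddd-dd-dddd layout (assumed format).
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum; real card numbers pass it, most random digit runs do not."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> tuple[str, int]:
    """Return the redacted text and the number of redactions made."""
    count = 0

    def card_sub(m: re.Match) -> str:
        nonlocal count
        digits = re.sub(r"[ -]", "", m.group())
        if luhn_valid(digits):
            count += 1
            return "[REDACTED_CARD]"
        return m.group()  # leave non-card digit runs untouched

    text = CARD_CANDIDATE.sub(card_sub, text)
    text, ssn_hits = SSN.subn("[REDACTED_SSN]", text)
    return text, count + ssn_hits
```

Returning the redaction count alongside the text lets the orchestrator log how often sensitive data was caught without logging the data itself.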

Once the input is cleared, the system moves to Processing Guardrails. During execution, the orchestrator ensures that only explicitly approved models are used for specific, mapped tasks. This prevents, for example, a developer from accidentally sending a simple text classification task to an expensive, unapproved reasoning model. The system also enforces rate limits, both to stay within provider quotas and to stop runaway request loops from causing cost overruns. Context retrieval sources, such as vector databases pulling internal documents, must be validated so the AI never reads files the user lacks clearance to see. If a model provider experiences an outage, fallback logic routes the request to a secure, approved backup model. These controls substantially reduce both operational downtime and compliance risk.
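Three of these processing controls fit in a short sketch: a per-task model allowlist, a token-bucket rate limiter, and fallback that only ever moves to another approved model. The task names, model names, and `APPROVED_ROUTES` table are hypothetical. Provider clients are modeled as plain callables that raise `ProviderError` on an outage.

```python
import time
from typing import Callable


class ProviderError(Exception):
    """Raised by a provider client on outage or timeout (assumed convention)."""


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int):
        self.rate, self.capacity = rate, capacity
        self.tokens = float(capacity)
        self.last = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical mapping: task -> ordered list of approved models (primary first).
APPROVED_ROUTES = {
    "classify": ["small-model-a", "small-model-b"],
    "contract_summary": ["large-model-eu", "large-model-eu-backup"],
}


def route(task: str, prompt: str, clients: dict[str, Callable[[str], str]],
          limiter: TokenBucket) -> tuple[str, str]:
    """Try approved models in order; never fall back to an unapproved one."""
    candidates = APPROVED_ROUTES.get(task)
    if not candidates:
        raise PermissionError(f"no approved model for task '{task}'")
    if not limiter.allow():
        raise RuntimeError("rate limit exceeded")
    errors = []
    for model in candidates:
        try:
            return model, clients[model](prompt)
        except ProviderError as exc:
            errors.append(f"{model}: {exc}")
    raise ProviderError("all approved models failed: " + "; ".join(errors))
```

The fallback list is per task, so an outage can never silently route regulated data to a model that was not approved for it. The function returns the model name so the audit log records which model actually answered.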

Finally, after the model generates a response, the system applies Output Guardrails. Model outputs cannot be trusted blindly; they must be checked for toxicity, bias, and brand-damaging language. The system must also enforce schema correctness: if a downstream microservice expects a well-formed JSON object, a conversational paragraph must be rejected. The output should also be scanned to prevent accidental disclosure of restricted internal information that the model might have hallucinated or inadvertently recalled. Combined with strict confidence thresholds, post-processing validation helps ensure that AI-driven decisions meet the enterprise's safety standards before they reach an end user or trigger an automated business action. [4]
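A minimal output guardrail might parse the response, check it against the shape the downstream service expects, scan it for restricted markers, and apply a confidence threshold. Everything specific here is an assumption for illustration: the field names, the marker strings, and the 0.8 threshold. A production system would more likely use a full JSON Schema validator and a trained safety classifier than simple string checks.

```python
import json

# Hypothetical contract the downstream service expects.
REQUIRED_FIELDS = {"decision": str, "confidence": (int, float), "reason": str}
# Hypothetical markers of restricted internal content.
RESTRICTED_MARKERS = ("INTERNAL ONLY", "CONFIDENTIAL")
MIN_CONFIDENCE = 0.8  # assumed threshold


def validate_output(raw: str) -> dict:
    """Parse and check a model response; raise ValueError on any failure."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        value = data.get(name)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"field '{name}' is missing or has the wrong type")
    upper = raw.upper()
    for marker in RESTRICTED_MARKERS:
        if marker in upper:
            raise ValueError(f"restricted marker '{marker}' in output")
    if data["confidence"] < MIN_CONFIDENCE:
        raise ValueError("confidence below threshold")
    return data
```

Raising on every failure keeps the check fail-closed. The caller decides whether a rejected output is retried, escalated to a human, or dropped.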

AI Orchestration Compliance in Regulated Environments

For enterprises operating in highly sensitive sectors such as finance, healthcare, insurance, or defense, AI orchestration compliance is non-negotiable. Regulators no longer accept "the AI is a black box" as a valid defense. Modern compliance frameworks increasingly require end-to-end data lineage, showing where data originated and how it was transformed. They demand clear human accountability for automated decisions that affect consumers or patients. Regulators also expect documentation of all model usage alongside proactive risk assessments that identify potential algorithmic bias.

A secure orchestration layer greatly simplifies compliance audits by centralizing decision logs in a single place. Instead of forcing a compliance team to trace AI usage manually across dozens of disconnected microservices and product silos, auditors can inspect one unified control plane and follow the full lifecycle of a decision from user input to model output. This centralization reduces audit complexity and can save substantial engineering time. It also strengthens trust with regulators and enterprise clients alike.
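One common way to make a centralized decision log trustworthy for auditors is hash chaining, in which each entry commits to the hash of the one before it. Any later edit to an entry then breaks verification. The sketch below is illustrative only, and the event field names are hypothetical. It is tamper-evident rather than tamper-proof: someone able to rewrite the entire log could recompute every hash, so real deployments typically also anchor the latest hash in separate, write-once storage.

```python
import hashlib
import json
import time


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # sort_keys gives a stable serialization, so hashes are reproducible.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, **event) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"ts": time.time(), "prev": prev, **event}
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the genesis value."""
        prev = self.GENESIS
        for rec in self.entries:
            body = {k: v for k, v in rec.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != rec["hash"]:
                return False
            prev = rec["hash"]
        return True
```

Each entry can carry the fields auditors need in order to reconstruct a decision, such as the initiating service, the selected model, the prompt template version, and the outcome.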

Designing Secure AI Orchestration Architecture

Architecturally speaking, achieving secure AI orchestration requires highly disciplined, modular layering. You cannot simply hardcode API keys into a web application and call it a day. A mature, enterprise-grade design cleanly separates the ecosystem into distinct, specialized zones.

At the top is the Application Layer, where end users and client interfaces live. Below it is the Orchestration Control Plane, which acts as the traffic controller and security gateway. Beneath the orchestrator sit the Model Execution Layer, which houses the LLMs and predictive engines, and the Data Retrieval Layer, which manages vector databases and enterprise search. Running alongside all of these are the Policy Engine, which evaluates rules on every request, and the Observability and Logging Stack, which records every request and decision.

In this architecture, frontend applications never call AI models directly. Every invocation must pass through the Orchestration Control Plane. This strict physical and logical separation ensures that corporate governance cannot be bypassed, whether accidentally, by well-meaning product teams embedding model calls directly in their own services, or maliciously. By design, the orchestration layer becomes the only sanctioned pathway for AI execution within the corporate network.
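A cheap build-time check can catch services that import a model provider's SDK directly instead of going through the control plane. This is a hedged sketch. The package names in `FORBIDDEN_MODULES` and the `services/orchestrator` path are assumptions to adapt to your own codebase. A static check like this complements network-level egress controls and keeping provider credentials only inside the orchestrator. It does not replace them.

```python
import ast
from pathlib import Path

# Assumed provider SDK package names; adjust to the SDKs your organization uses.
FORBIDDEN_MODULES = {"openai", "anthropic"}
# Assumed location of the only code allowed to import them (the orchestrator).
ALLOWED_PREFIX = Path("services/orchestrator")


def direct_model_imports(source: str) -> list[str]:
    """Return provider modules imported by this Python source text."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]  # absolute "from x import y" only
        else:
            continue
        found += [n for n in names if n.split(".")[0] in FORBIDDEN_MODULES]
    return found


def scan(root: Path) -> list[str]:
    """List every file outside the orchestrator that imports a provider SDK."""
    violations = []
    for path in root.rglob("*.py"):
        rel = path.relative_to(root)
        if rel.is_relative_to(ALLOWED_PREFIX):  # Python 3.9+
            continue
        for mod in direct_model_imports(path.read_text(encoding="utf-8")):
            violations.append(f"{rel}: imports {mod} directly")
    return violations
```

In CI, calling `scan(Path("."))` and failing the build when the result is non-empty turns the "only sanctioned pathway" rule into something enforced on every pull request.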

Common Failure Patterns in AI Orchestration Security

Despite best intentions, enterprise technology teams often encounter highly predictable pitfalls when rolling out AI. One of the most common and painful mistakes is attempting to retrofit security architectures after AI features have already scaled massively in production. Trying to put the genie back in the bottle leads to heavily duplicated prompt logic, inconsistent security controls, and a frustrating developer experience where code breaks unpredictably.

Another frequent failure pattern is an overreliance on external, provider-level safety filters. Assuming that a vendor's default moderation endpoint will protect your highly specific corporate data is incredibly dangerous; external model providers cannot possibly enforce your organization-specific PII policies or regional compliance laws. True governance must live firmly inside your own architecture.

A third, often fatal, mistake is completely ignoring internal misuse. While external hackers are a threat, insider risk—such as employees using misconfigured prompts, gaining inappropriate data access through an AI assistant, or engaging in unauthorized shadow experimentation—can be just as dangerous, if not more so. Strong, holistic AI orchestration governance inherently addresses both sophisticated external threats and subtle internal vulnerabilities simultaneously.

Measuring AI Orchestration Security Effectiveness

In the enterprise, if a security protocol cannot be quantified, it practically does not exist. Security must be highly measurable. Enterprise teams should actively monitor a specific set of telemetry to ensure their orchestration layer is functioning properly. They must track model invocation logs meticulously, breaking them down by specific tenants, business units, and geographic regions to spot anomalies. They should monitor the exact rate of policy enforcement triggers to understand how often users are attempting restricted actions.

Furthermore, tracking output validation failures is critical to determine if a specific model is beginning to hallucinate or degrade quality over time. Teams must also audit how frequently prompt version changes occur to maintain strict quality control over the system's core instructions. Finally, tracking incident response time for AI-specific security alerts is vital. By extensively instrumenting these precise metrics, technical leadership gains crystal-clear visibility into the real-time health of their AI orchestration security posture. These metrics ultimately transform governance from a theoretical compliance exercise into a hardened operational reality.
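Several of these metrics fall out directly from the orchestrator's event stream. The sketch below computes per-tenant request counts, policy-block rates, and output-rejection rates. It assumes a hypothetical event shape in which each request produces one event with a `tenant` and an `outcome` of `ok`, `policy_block`, or `output_rejected`. Grouping by business unit or region works the same way with a different key.

```python
from collections import Counter, defaultdict


def security_metrics(events: list[dict]) -> dict:
    """Summarize per-request orchestration events into per-tenant rates."""
    by_tenant: dict[str, Counter] = defaultdict(Counter)
    for e in events:
        by_tenant[e["tenant"]][e["outcome"]] += 1
    report = {}
    for tenant, counts in by_tenant.items():
        total = sum(counts.values())  # always > 0: tenant appears only if it has events
        report[tenant] = {
            "requests": total,
            "policy_block_rate": counts["policy_block"] / total,
            "output_rejection_rate": counts["output_rejected"] / total,
        }
    return report
```

Tracking these rates over time is what makes them useful. A sudden rise in output rejections for one tenant or model is an early signal of the quality degradation described above.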

Change Management and Cultural Alignment

Deploying a robust security architecture is only half the battle; organizational psychology and cultural alignment are equally important. You cannot force a new operational paradigm onto an engineering organization without achieving buy-in. Product teams must deeply understand exactly why centralized orchestration is a mandatory requirement, rather than viewing it as red tape. Engineering teams must willingly adopt shared primitives rather than building rogue, shadow infrastructure. Furthermore, compliance and legal teams must actively collaborate with platform engineering teams incredibly early in the software development lifecycle, rather than waiting until the day before a launch.

To achieve this, internal training and platform documentation should clearly and empathetically communicate the bigger picture. Leadership must explain precisely why direct, hardcoded model calls are strictly prohibited to protect the company. They must demonstrate how automated guardrails actually protect the business and reduce developers' anxiety. Finally, they should show how pristine audit logs directly support winning massive enterprise customer deals by proving security competency. When cross-functional teams truly understand the financial and protective rationale behind secure AI orchestration, cultural resistance drops and platform adoption accelerates dramatically.

The Strategic Advantage of Secure Orchestration

Enterprises that successfully master AI orchestration security do vastly more than simply mitigate downside risk—they accelerate their pace of innovation safely. Because security controls are centralized in one intelligent gateway, the burden is lifted from individual developers. Consequently, new AI-powered product features can ship much faster, as teams no longer must reinvent data masking algorithms for every new microservice.

Furthermore, because the orchestration layer abstracts the underlying AI, external model providers can be swapped with far less rework, since application code depends on the orchestrator's interface rather than on each vendor's SDK. Enterprise customers also gain confidence in the company's compliance posture, which can shorten B2B sales cycles. Lengthy enterprise security reviews become more streamlined because the architecture is intentionally designed for transparency. Ultimately, security correctly embedded into the orchestration layer becomes a business enabler, not a bureaucratic blocker. Over time, this level of governance maturity becomes a competitive differentiator. Software buyers increasingly ask how a vendor's AI is systematically controlled, not just what tricks it can perform. Organizations that can demonstrate structured, architectural oversight earn trust faster and win larger contracts in enterprise markets. [5]

Conclusion

Deploying artificial intelligence without structured, systemic governance introduces silent, compounding risk into the enterprise. As models scale across operational workflows, enterprises must shift their mindset from isolated experimentation to architectural control. AI orchestration security provides that control. It centralizes policy enforcement, standardizes model routing, embeds guardrails directly into daily workflows, and enables end-to-end auditability across the AI stack. By integrating governance into the orchestration layer itself, organizations transform AI from a collection of scattered, risky integrations into a secure, resilient, enterprise-ready capability. The future of intelligent enterprise systems will be defined not only by the sophistication of the underlying models but by the strength of the governance frameworks that control them.

Frequently Asked Questions

1. What is AI orchestration security in simple terms?

AI orchestration security is the architectural practice of natively embedding governance, strict policy enforcement, and complete observability into the central orchestration layer that actively manages your AI models and workflows. It acts as an intelligent proxy, ensuring that artificial intelligence operates safely, predictably, and strictly in compliance with all internal enterprise standards and external regulations.

2. How does AI orchestration governance differ from traditional IT governance?

While traditional IT governance focuses on managing server infrastructure, network access, and standard database security, AI orchestration governance addresses a different set of problems. It focuses on controlling dynamic model invocations, versioning natural-language prompt logic, managing the flow of unstructured data into third-party LLMs, and semantically validating non-deterministic AI outputs.

3. Why is AI orchestration compliance important for enterprises?

AI orchestration compliance fundamentally ensures that a company's model usage, sensitive data handling, and automated decision-making processes strictly meet rigorous regulatory standards and audit requirements. Without a centralized orchestration gateway, attempting to prove compliance across dozens of fragmented microservices becomes a chaotic, highly risky, and nearly impossible endeavor.

4. What are AI orchestration guardrails?

AI orchestration guardrails are automated, dynamic enforcement mechanisms deeply embedded into the lifecycle of an AI request. They operate before, during, and immediately after a model executes to scan and validate inputs, restrict processing paths based on user roles, and rigorously validate the generated outputs according to strict, pre-coded enterprise safety policies.

5. Can small organizations implement secure AI orchestration?

Yes. Even early-stage startups and smaller technical teams can and should introduce a lightweight orchestration control plane early on. By centralizing model routing and basic logging from day one, smaller teams avoid accumulating massive architectural technical debt, allowing their governance maturity to scale seamlessly alongside their user base.

6. How often should orchestration policies be reviewed?

Security policies in the AI space should be reviewed continuously, especially alongside new foundational model upgrades, shifts in international regulatory laws, and internal product feature expansions. However, conducting formal, comprehensive governance reviews on a quarterly basis serves as a highly effective and standard baseline for modern enterprise technology teams.

AI Orchestration Security: Complete Enterprise Governance Guide

Table of Contents

- Introduction: From Deployment to Governance

- The Enterprise Wake-Up Call

- What Is AI Orchestration Security?

- The Four Pillars of AI Orchestration Governance

- Building AI Orchestration Guardrails

- AI Orchestration Compliance in Regulated Environments

- Designing Secure AI Orchestration Architecture

- Common Failure Patterns

- Measuring Security Effectiveness

- Change Management and Cultural Alignment

- The Strategic Advantage of Secure Orchestration

- Conclusion

- Frequently Asked Questions

When software companies rush to add AI to their products, things can quickly turn into a messy, expensive free-for-all where sensitive customer data might accidentally slip out. The fix for this headache is AI orchestration security, which basically acts like a smart, centralized traffic cop for all your company's AI requests. Instead of letting every part of your software talk directly to outside AI models, this central hub steps in to automatically scrub away private details like names or credit card numbers before the data ever leaves your servers. It also makes sure only the right employees can use certain AI tools, and it double-checks the AI's answers for mistakes or bad advice before showing them to the user. By setting up this secure middleman, your developers can build cool new features without stressing over security, and your sales team can easily show big, cautious clients that their private data is in perfectly safe hands. [1]

In the last few years, enterprise software has undergone a profound structural shift. Artificial intelligence is no longer an experimental sandbox initiative isolated in an innovation lab; it is deeply embedded into the core operations of the modern business. Today, AI powers customer support workflows, underwriting engines, sales copilots, financial reconciliation systems, fraud detection pipelines, and executive operational dashboards. As a result, foundational models are being called thousands and sometimes millions of times per day across a single organization. However, as these intelligent systems scale to meet demand, a critical realization emerges inside enterprise technology teams: deploying AI is relatively easy; governing it is exceptionally difficult.

Consider the trajectory of a mid-sized technology company that recently built a highly capable AI assistant intended for internal operations. Initially, the tool was a massive success. It expertly summarized complex vendor contracts, drafted nuanced renewal emails, and analyzed upcoming revenue risks with remarkable accuracy. Naturally, within months, its usage organically expanded across various departments. But behind the scenes, architectural cracks began to show. There were absolutely no centralized controls governing which specific models processed internal data. Furthermore, no audit trails existed to track who was changing the prompt instructions. Most alarmingly, sensitive customer information occasionally flowed into external, third-party model APIs without any formal security review or data processing agreement validation. While nothing catastrophic happened immediately, leadership began asking the right, albeit uncomfortable, questions.

That question marks the true beginning of operational maturity. AI systems do not simply require basic performance monitoring; they require structured, systemic oversight. They need absolute policy enforcement before, during, and after execution. They need robust guardrails that actively prevent misuse, algorithmic drift, and sensitive data exposure. Furthermore, they require centralized logging, rigid approval workflows, and clear accountability mapped to human owners. This is exactly where AI orchestration security becomes foundational. It is not merely an optional security layer, or an afterthought bolted onto existing AI applications. Rather, it is the fundamental architecture that ensures enterprise intelligence operates safely, predictably, and in strict alignment with corporate policy. In this guide, we will deeply explore how enterprise teams can build a structured framework around AI orchestration security, seamlessly integrating governance, compliance, and operational control into every single layer of the orchestration stack.

The Enterprise Wake-Up Call: Why Security Comes After Scale

Many organizations begin their AI journey with a singular, overriding focus on feature velocity. Product teams are heavily incentivized to ship intelligent summarization tools as quickly as possible to wow users. Simultaneously, engineering departments rush to embed sophisticated recommendation engines, while data teams deploy predictive models directly into production. The early wins are undeniably exciting and often drive significant momentum. But as adoption increases and AI becomes decentralized across the company, the architectural complexity compounds at an alarming rate. [2]

Different teams begin to integrate models using entirely different patterns and libraries. Core prompt logic, which dictates how the AI behaves, is duplicated across dozens of microservices, making global updates nearly impossible. More critically, sensitive information begins flowing through completely disparate AI endpoints without any centralized security review or data masking. Consequently, telemetry and logs are fragmented or entirely incomplete. When compliance officers inevitably ask for traceability regarding how an automated decision was made, the engineering response usually involves days of manual reconstruction and log diving. This is not due to negligence on the part of the developers—it is an architectural inevitability when scaling without a plan.

What Is AI Orchestration Security?

At its core, AI orchestration security refers to the practice of embedding protection, strict policy enforcement, and comprehensive observability directly into the orchestration layer that coordinates your AI models and workflows. Instead of allowing various frontend applications or backend microservices to call AI model APIs directly, organizations introduce a dedicated, central orchestration service. This service effectively becomes the intelligent control plane sitting firmly between frontend applications, backend enterprise data stores, and the external or internal model providers.

Within that centralized control plane, a structured set of security operations runs automatically on every request. First, model access is restricted by organizational policy, so that only authorized services can invoke specific, approved models. Next, incoming data is validated and sanitized to strip out sensitive information before it is sent to a model. Prompt templates that guide the AI are version-controlled and require formal approval, treating prompt engineering with the same rigor as software engineering. Once the model returns a response, the output is validated against predefined schemas and safety constraints to catch malformed responses, unsupported claims, or toxic content before they reach the user. Finally, logs capture the full decision lineage, creating a forensic trail for every request. This is not simply security applied at the network infrastructure level; it is architectural oversight applied to the AI workflow itself. Without this orchestration, AI remains reactive, fragmented, and vulnerable. With secure orchestration, AI becomes controllable, more predictable, and auditable.
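The sequence described above can be sketched as a small gateway class. This is a minimal Python illustration under stated assumptions, not a reference implementation: the service name `support-app`, the model name `summary-model-small`, the `PROMPT_TEMPLATES` and `MODEL_ACCESS` tables, and the injected `call_model` function are all hypothetical stand-ins, and the email mask and length check stand in for far richer sanitization and output validation.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical registry of approved, version-pinned prompt templates.
PROMPT_TEMPLATES = {
    ("summarize", "v3"): "Summarize the following support ticket:\n{text}",
}

# Hypothetical access policy: which calling service may use which models.
MODEL_ACCESS = {"support-app": {"summary-model-small"}}

# Simple email pattern used as a stand-in for full PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Orchestrator:
    # Injected provider client (hypothetical): (model_name, prompt) -> response text.
    call_model: Callable[[str, str], str]
    audit_log: list = field(default_factory=list)

    def run(self, service: str, model: str, task: str, version: str, text: str) -> str:
        # 1. Access policy: only authorized services may invoke this model.
        if model not in MODEL_ACCESS.get(service, set()):
            raise PermissionError(f"{service} may not call {model}")
        # 2. Input sanitization: mask email addresses before data leaves the boundary.
        clean = EMAIL.sub("[EMAIL]", text)
        # 3. Approved, versioned prompt template (KeyError if not registered).
        prompt = PROMPT_TEMPLATES[(task, version)].format(text=clean)
        # 4. The model call happens only here, inside the gateway.
        output = self.call_model(model, prompt)
        # 5. Output validation: reject empty or oversized responses.
        if not output.strip() or len(output) > 2000:
            raise ValueError("model output failed validation")
        # 6. Decision lineage: who called, which model, which prompt version.
        self.audit_log.append(
            {"service": service, "model": model, "prompt_version": f"{task}:{version}"}
        )
        return output
```

Because the provider client is injected, the same gateway can be exercised with a fake client in tests, and every call path shares the same checks in the same order.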

The Four Pillars of AI Orchestration Governance

- Policy as Code: Security policies must be entirely embedded into the orchestration layer through a concept known as Policy as Code. Instead of relying on static compliance documentation or wiki pages that developers might ignore, teams define explicit rules in code that automatically enforce data handling standards at runtime. For example, a coded policy might dictate that certain personally identifiable information (PII) may never be transmitted to external, cloud-based providers under any circumstances. Another policy might ensure that regional data originating in Europe is strictly restricted to specific model endpoints hosted within the EU to comply with GDPR. Crucially, these policies must be executed automatically and deterministically before any model call occurs, acting as an impassable gateway. [3]

- Role-Based Access Control: The system must also enforce rigorous Role-Based Access Control, because not every user, application, or service should have the same AI capabilities. The central orchestration layer should enforce strict tenant isolation, environment segmentation between staging and production, and granular role-based permissions. By embedding access control directly into the orchestration hub, enterprises turn AI from a loosely connected, risky feature into a tightly governed, enterprise-grade system.

- Observability and Auditability: A mature system also depends on Observability and Auditability. The orchestrator logs every orchestration event to create an unbroken chain of custody. At a minimum, it must record which user or service initiated the request, which model the routing engine selected, and the exact version of the prompt template that was used. Without this level of granular observability, demonstrating regulatory compliance becomes practically impossible. With centralized logging, AI orchestration compliance becomes something teams can verify with evidence rather than an aspirational goal.

- Lifecycle and Change Management: Finally, organizations must implement strict Lifecycle and Change Management. The reality of modern AI is that prompt logic changes frequently, underlying foundational models are constantly upgraded or deprecated, and the routing logic determining which model to use evolves continuously. Therefore, every single change to this ecosystem must be meticulously controlled and reviewable via pull requests. Formal approval workflows must exist, especially for modifying prompts that process regulated or highly sensitive enterprise data.
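Policy as Code and Role-Based Access Control can be combined into one deterministic function that runs before every model call. The sketch below is a hypothetical illustration in plain Python: the endpoint names, the `ROLE_PERMISSIONS` table, and the `contains_pii` flag (assumed to be set by an upstream classifier) are invented for the example. Production systems often express such rules in a dedicated policy engine instead, but the idea is the same: explicit rules, evaluated in order, denying anything unknown by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRequest:
    role: str           # caller's role, e.g. "analyst"
    data_region: str    # where the data originated, e.g. "EU"
    contains_pii: bool  # assumed to be set by an upstream PII classifier
    endpoint: str       # requested model endpoint


# Hypothetical endpoint catalogue: hosting region and whether the provider is external.
ENDPOINTS = {
    "internal-eu-llm": {"region": "EU", "external": False},
    "vendor-us-llm": {"region": "US", "external": True},
}

# Hypothetical role -> permitted endpoints mapping.
ROLE_PERMISSIONS = {
    "analyst": {"internal-eu-llm"},
    "developer": {"internal-eu-llm", "vendor-us-llm"},
}


def evaluate(req: ModelRequest) -> tuple[bool, str]:
    """Run every rule in order; the first violation denies the request."""
    ep = ENDPOINTS.get(req.endpoint)
    if ep is None:
        return False, "unknown endpoint"
    if req.endpoint not in ROLE_PERMISSIONS.get(req.role, set()):
        return False, "role not permitted for endpoint"
    if req.contains_pii and ep["external"]:
        return False, "PII may not be sent to external providers"
    if req.data_region == "EU" and ep["region"] != "EU":
        return False, "EU-origin data must stay on EU-hosted endpoints"
    return True, "allowed"
```

Keeping these rules in version-controlled code means any change to them passes through the same pull-request review described under Lifecycle and Change Management.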

Building AI Orchestration Guardrails into the Workflow

Guardrails in AI systems are not static, one-time filters; they are dynamic enforcement mechanisms embedded throughout the orchestration pipeline. To be effective, enterprise AI orchestration guardrails must operate consistently across three distinct stages of the data journey.

Before a foundational model receives any data, the orchestration layer must apply Input Guardrails. In this initial phase, the system scans the incoming payload to detect and redact sensitive information such as credit card numbers or Social Security numbers. It validates the request against its expected schema so that malformed payloads are rejected before they cause processing errors. The orchestrator also confirms tenant authorization, ensuring the requesting user has the right to perform the action. Finally, it enforces data residency constraints, geofencing data so that it does not cross restricted international borders. Intervening at this point prevents unintended data exposure before any AI processing begins.
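The redaction step can be illustrated with pattern matching. This is a deliberately small sketch, assuming US-style Social Security numbers in `NNN-NN-NNNN` form and card numbers of 13 to 19 digits; candidate card numbers are masked only when they pass the Luhn checksum, which cuts down on false positives from order IDs and similar numbers. Real deployments typically rely on dedicated PII-detection tooling that covers many more entity types and formats.

```python
import re

# US-style SSN in NNN-NN-NNNN form.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13-19 digits, optionally separated by single spaces or dashes; starts and ends on a digit.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, check mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> str:
    """Replace SSNs and Luhn-valid card numbers with placeholders."""
    text = SSN.sub("[SSN]", text)

    def mask_card(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[CARD]" if luhn_valid(digits) else match.group()

    return CARD_CANDIDATE.sub(mask_card, text)
```

For example, `redact("Card 4111 1111 1111 1111, SSN 123-45-6789, order 1234567890123")` masks the card and the SSN but leaves the order number, which fails the checksum.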

Once the input is cleared, the system moves to Processing Guardrails. During execution, the orchestrator must ensure that only explicitly approved models are used for specific, mapped tasks, preventing a developer from accidentally sending a simple text classification task to an expensive, unapproved reasoning model. The system must also enforce rate limits so that runaway loops or abusive request floods cannot trigger severe cost overruns or exhaust provider quotas. Any context retrieval sources, such as vector databases pulling internal documents, must be checked so the AI does not read files the user lacks clearance to see. Should a model provider experience an outage, fallback logic must route the request to a pre-approved backup model. Together, these controls reduce both operational downtime and compliance risk.
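Three of these processing guardrails (a task-to-model allowlist, rate limiting, and fallback) fit in a short sketch. The task names, model names, and the `ProviderError` exception are hypothetical; the rate limiter is a standard token bucket, chosen here for illustration, and it accepts an injectable clock so its behavior can be tested deterministically. Retrieval authorization is omitted because it depends on the document store's own permission model.

```python
import time
from typing import Callable, Tuple

# Hypothetical allowlist: each task maps to a primary model followed by approved fallbacks.
APPROVED_MODELS = {
    "classify": ["small-classifier", "small-classifier-backup"],
    "draft_reply": ["general-llm", "general-llm-backup"],
}


class ProviderError(Exception):
    """Raised by a (hypothetical) provider client when a model endpoint is unavailable."""


class TokenBucket:
    """Allows short bursts up to `capacity`, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate_per_sec, capacity, clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill based on elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def execute(task: str, prompt: str, call_model: Callable[[str, str], str],
            bucket: TokenBucket) -> Tuple[str, str]:
    """Return (model_used, response) using only approved models for the task."""
    if task not in APPROVED_MODELS:
        raise PermissionError(f"no approved model for task {task!r}")
    if not bucket.allow():
        raise RuntimeError("rate limit exceeded")
    last_error = None
    for model in APPROVED_MODELS[task]:  # primary first, then pre-approved fallbacks
        try:
            return model, call_model(model, prompt)
        except ProviderError as err:
            last_error = err
    raise RuntimeError("all approved models failed") from last_error
```

Fallback only ever walks the approved list for the task, so an outage cannot silently push traffic onto an unvetted model.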

Finally, after the response is generated, the system applies Output Guardrails. Model outputs cannot be trusted blindly; they must be validated for toxicity, bias, and brand-damaging language. The system must also check schema correctness, so that if a downstream microservice expects a well-formed JSON object, the AI has not responded with a conversational paragraph. The output must also be scanned to prevent the accidental disclosure of restricted internal information that the model may have hallucinated or inadvertently recalled. Combined with confidence thresholds that route low-confidence results to human review, this post-processing validation helps ensure that AI-driven decisions meet the enterprise's safety standards before they reach an end user or trigger an automated business action. [4]
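Output validation can be sketched as a function that either returns a parsed, schema-conformant result or raises. The expected fields, the `MIN_CONFIDENCE` value, and the `BLOCKED_TERMS` list are hypothetical, and the sketch assumes the model (or a separate classifier) returns a numeric `confidence` field; self-reported model confidence is not necessarily calibrated, so the threshold is illustrative only. Toxicity and bias checks would normally call a dedicated classifier and are left out here.

```python
import json

MIN_CONFIDENCE = 0.8  # hypothetical threshold; lower scores go to human review
BLOCKED_TERMS = ("internal use only", "confidential")  # hypothetical restricted markers


def validate_output(raw: str) -> dict:
    """Parse model output and enforce schema, confidence, and disclosure rules."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        raise ValueError("output is not valid JSON") from err
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    # Schema: 'category' and 'reply' must be strings, 'confidence' a number.
    for name in ("category", "reply"):
        if not isinstance(data.get(name), str):
            raise ValueError(f"field {name!r} missing or not a string")
    conf = data.get("confidence")
    if isinstance(conf, bool) or not isinstance(conf, (int, float)):
        raise ValueError("field 'confidence' missing or not a number")
    if conf < MIN_CONFIDENCE:
        raise ValueError("confidence below threshold; route to human review")
    # Disclosure check: block replies containing restricted markers.
    lowered = data["reply"].lower()
    if any(term in lowered for term in BLOCKED_TERMS):
        raise ValueError("reply contains restricted content")
    return data
```

A conversational paragraph, a low-confidence answer, or a reply quoting restricted material all raise `ValueError`, which the orchestrator can treat as a signal to retry, escalate, or return a safe default.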

AI Orchestration Compliance in Regulated Environments

For enterprises operating in highly regulated sectors such as finance, healthcare, insurance, or defense, AI orchestration compliance is non-negotiable. Regulators are increasingly unwilling to accept "the AI is a black box" as a valid defense. Modern compliance frameworks increasingly require organizations to maintain end-to-end data lineage, showing where data originated and how it was transformed. They demand clear human accountability for automated decisions that affect consumers or patients. Regulators also expect documentation of all model usage alongside proactive risk assessment procedures to identify potential algorithmic bias.

A secure orchestration layer greatly simplifies compliance audits by centralizing decision logs in a single pane of glass. Instead of forcing the compliance team to manually trace AI usage across dozens of disconnected microservices and product silos, auditors can inspect one unified control plane and follow the full lifecycle of a decision from user input to model output. This centralization reduces audit complexity and saves significant engineering time, and it strengthens trust with regulators and enterprise clients alike.
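One way to make the centralized decision log tamper-evident is to chain entries by hash, so that altering or reordering any past entry breaks verification. The sketch below is a hypothetical illustration using only the Python standard library; the event fields are invented examples. Hash chaining makes tampering detectable rather than impossible, and in practice it would be paired with append-only or write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only decision log; each entry embeds the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, **event) -> dict:
        # Event keys must not reuse the reserved names "ts", "prev", or "hash".
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": datetime.now(timezone.utc).isoformat(), "prev": prev, **event}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; any edited or reordered entry fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body.get("prev") != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Each record captures the questions auditors typically ask (who called, which model, which prompt version, and what the outcome was), and `verify()` gives them a quick integrity check over the whole trail.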

Designing Secure AI Orchestration Architecture

Architecturally speaking, achieving secure AI orchestration requires highly disciplined, modular layering. You cannot simply hardcode API keys into a web application and call it a day. A mature, enterprise-grade design cleanly separates the ecosystem into distinct, specialized zones.

At the very top is the Application Layer, where end users and client interfaces live. Below it is the Orchestration Control Plane, which acts as the central traffic controller and security gateway. Beneath the orchestrator sits the Model Execution Layer, housing the actual LLMs and predictive engines, alongside the Data Retrieval Layer, which manages vector databases and enterprise search. Running parallel to all of these are the Policy Engine, which evaluates rules on every request, and the Observability and Logging Stack, which records every request and policy decision.

In this architecture, frontend applications never call AI models directly. Every invocation must pass through the Orchestration Control Plane. This strict physical and logical separation ensures that corporate governance cannot be bypassed, whether accidentally by well-meaning product teams embedding model calls into their own services or deliberately by a malicious actor. By design, the orchestration layer becomes the only sanctioned pathway for AI execution within the corporate network.
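The single-pathway rule is normally enforced outside application code, for example with network egress policies, a service mesh, or by issuing model-provider credentials only to the orchestrator. The sketch below models just the decision such a control makes; the host names and the `orchestration-control-plane` workload identity are hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical model-provider hosts and the only workload identity allowed to reach them.
MODEL_PROVIDER_HOSTS = {"api.model-vendor.example", "llm.internal.example"}
ORCHESTRATOR_IDENTITY = "orchestration-control-plane"


def egress_allowed(caller_identity: str, url: str) -> bool:
    """Deny direct model traffic from any workload other than the orchestrator."""
    host = (urlparse(url).hostname or "").lower()
    if host in MODEL_PROVIDER_HOSTS:
        return caller_identity == ORCHESTRATOR_IDENTITY
    # Non-model traffic is out of scope here and governed by other network policies.
    return True
```

A frontend service that tries to reach a provider host directly is refused, while the same request from the orchestrator is allowed.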

Common Failure Patterns in AI Orchestration Security

Despite best intentions, enterprise technology teams often encounter highly predictable pitfalls when rolling out AI. One of the most common and painful mistakes is attempting to retrofit security architectures after AI features have already scaled massively in production. Trying to put the genie back in the bottle leads to heavily duplicated prompt logic, inconsistent security controls, and a frustrating developer experience where code breaks unpredictably.

Another frequent failure pattern is an overreliance on external, provider-level safety filters. Assuming that a vendor's default moderation endpoint will protect your highly specific corporate data is incredibly dangerous; external model providers cannot possibly enforce your organization-specific PII policies or regional compliance laws. True governance must live firmly inside your own architecture.

A third, often fatal, mistake is completely ignoring internal misuse. While external hackers are a threat, insider risk—such as employees using misconfigured prompts, gaining inappropriate data access through an AI assistant, or engaging in unauthorized shadow experimentation—can be just as dangerous, if not more so. Strong, holistic AI orchestration governance inherently addresses both sophisticated external threats and subtle internal vulnerabilities simultaneously.

Measuring AI Orchestration Security Effectiveness

In the enterprise, if a security protocol cannot be quantified, it practically does not exist. Security must be highly measurable. Enterprise teams should actively monitor a specific set of telemetry to ensure their orchestration layer is functioning properly. They must track model invocation logs meticulously, breaking them down by specific tenants, business units, and geographic regions to spot anomalies. They should monitor the exact rate of policy enforcement triggers to understand how often users are attempting restricted actions.

Furthermore, tracking output validation failures is critical to determine if a specific model is beginning to hallucinate or degrade quality over time. Teams must also audit how frequently prompt version changes occur to maintain strict quality control over the system's core instructions. Finally, tracking incident response time for AI-specific security alerts is vital. By extensively instrumenting these precise metrics, technical leadership gains crystal-clear visibility into the real-time health of their AI orchestration security posture. These metrics ultimately transform governance from a theoretical compliance exercise into a hardened operational reality.
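Several of these metrics can be computed directly from orchestration events. The sketch below assumes each event is a dictionary with hypothetical keys `tenant`, `region`, `policy_blocked`, and (for requests that reached a model) `output_valid`; it counts invocations per tenant and region and derives a policy-trigger rate and an output-validation failure rate per tenant. Output failures are measured only over requests that were actually executed, since blocked requests never produce output.

```python
from collections import Counter, defaultdict


def security_metrics(events):
    """Return (invocations per (tenant, region), per-tenant policy and output-failure rates)."""
    invocations = Counter((e["tenant"], e["region"]) for e in events)
    counts = defaultdict(lambda: {"total": 0, "blocked": 0, "executed": 0, "invalid": 0})
    for e in events:
        c = counts[e["tenant"]]
        c["total"] += 1
        if e["policy_blocked"]:
            c["blocked"] += 1      # a policy rule stopped the request before the model call
        else:
            c["executed"] += 1
            if not e.get("output_valid", False):
                c["invalid"] += 1  # the model answered but output validation failed
    rates = {
        tenant: {
            "policy_trigger_rate": c["blocked"] / c["total"],
            "output_failure_rate": c["invalid"] / c["executed"] if c["executed"] else 0.0,
        }
        for tenant, c in counts.items()
    }
    return invocations, rates
```

Tracked over time, a rising output failure rate for one model or tenant is an early signal of quality drift, and a spike in policy triggers can point to misuse or a misconfigured integration.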

Change Management and Cultural Alignment

Deploying a robust security architecture is only half the battle; organizational psychology and cultural alignment matter just as much. A new operational paradigm cannot be forced onto an engineering organization without buy-in. Product teams must understand why centralized orchestration is a requirement rather than viewing it as red tape. Engineering teams must adopt shared primitives instead of building rogue, shadow infrastructure. Compliance and legal teams must also collaborate with platform engineering early in the software development lifecycle, rather than waiting until the day before a launch.

To achieve this, internal training and platform documentation should clearly and empathetically communicate the bigger picture. Leadership must explain precisely why direct, hardcoded model calls are strictly prohibited to protect the company. They must demonstrate how automated guardrails actually protect the business and reduce developers' anxiety. Finally, they should show how pristine audit logs directly support winning massive enterprise customer deals by proving security competency. When cross-functional teams truly understand the financial and protective rationale behind secure AI orchestration, cultural resistance drops and platform adoption accelerates dramatically.

The Strategic Advantage of Secure Orchestration

Enterprises that master AI orchestration security do more than mitigate downside risk; they also innovate faster, and more safely. Because security controls are centralized in one gateway, the burden is lifted from individual developers. New AI-powered product features can therefore ship faster, because teams no longer need to reinvent data masking for every new microservice.

Because the orchestration layer abstracts the underlying AI, external model providers can be swapped with changes largely confined to the orchestrator instead of rewriting application logic across many services. Enterprise customers, in turn, gain confidence in the company's compliance posture, which can shorten B2B sales cycles. Lengthy enterprise security reviews become more streamlined because the architecture is intentionally designed for transparency. Security, when correctly embedded into the orchestration layer, becomes a business enabler rather than a bureaucratic blocker. Over time, this level of governance maturity becomes a competitive differentiator. Software buyers increasingly ask how a vendor's AI is systematically controlled, not just what parlor tricks it can perform. Organizations that can confidently demonstrate structured, architectural oversight earn trust faster and win larger contracts in enterprise markets. [5]

Conclusion

Deploying artificial intelligence without structured, systemic governance introduces silent, compounding risk into the enterprise ecosystem. As models scale across operational workflows, enterprises must shift their mindset from isolated experimentation to architectural control. AI orchestration security provides that control. It centralizes policy enforcement, standardizes model routing, embeds guardrails into daily workflows, and enables end-to-end auditability across the entire AI stack. By integrating governance into the orchestration layer itself, organizations transform AI from a collection of scattered, risky integrations into a secure, resilient, enterprise-ready capability. The future of intelligent enterprise systems will be defined not solely by the sophistication of the underlying models but by the strength and security of the governance frameworks that control them.

Frequently Asked Questions

1. What is AI orchestration security in simple terms?

AI orchestration security is the architectural practice of natively embedding governance, strict policy enforcement, and complete observability into the central orchestration layer that actively manages your AI models and workflows. It acts as an intelligent proxy, ensuring that artificial intelligence operates safely, predictably, and strictly in compliance with all internal enterprise standards and external regulations.

2. How does AI orchestration governance differ from traditional IT governance?

While traditional IT governance focuses on server infrastructure, network access, and database security, AI orchestration governance addresses a different set of concerns: the control of dynamic model invocations, the versioning of natural language prompt logic, the flow of unstructured data into third-party LLMs, and the semantic validation of non-deterministic AI outputs.

3. Why is AI orchestration compliance important for enterprises?

AI orchestration compliance fundamentally ensures that a company's model usage, sensitive data handling, and automated decision-making processes strictly meet rigorous regulatory standards and audit requirements. Without a centralized orchestration gateway, attempting to prove compliance across dozens of fragmented microservices becomes a chaotic, highly risky, and nearly impossible endeavor.

4. What are AI orchestration guardrails?

AI orchestration guardrails are automated, dynamic enforcement mechanisms deeply embedded into the lifecycle of an AI request. They operate before, during, and immediately after a model executes to scan and validate inputs, restrict processing paths based on user roles, and rigorously validate the generated outputs according to strict, pre-coded enterprise safety policies.

5. Can small organizations implement secure AI orchestration?

Yes. Even early-stage startups and smaller technical teams can and should introduce a lightweight orchestration control plane early on. By centralizing model routing and basic logging from day one, smaller teams avoid accumulating massive architectural technical debt, allowing their governance maturity to scale seamlessly alongside their user base.

6. How often should orchestration policies be reviewed?

Security policies in the AI space should be reviewed continuously, especially alongside new foundational model upgrades, shifts in international regulatory laws, and internal product feature expansions. However, conducting formal, comprehensive governance reviews on a quarterly basis serves as a highly effective and standard baseline for modern enterprise technology teams.
